[J10] Segmentation of Points in the Future: Joint Segmentation and Prediction of a Point Cloud

Abstract

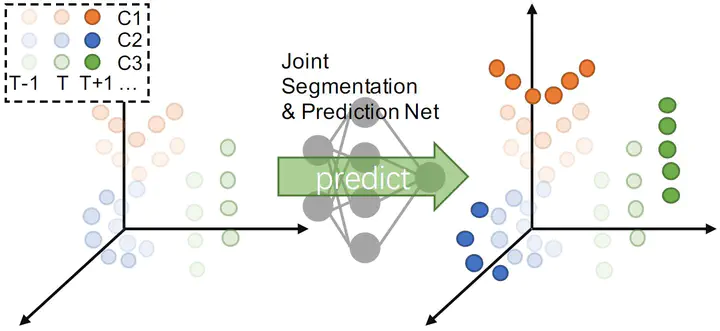

Recognizing and predicting future three-dimensional (3D) scenes is crucial for real-time vision-based control systems because it enables them to react appropriately in advance. This study proposes, for the first time, a method that predicts the future positions of a 3D point cloud and simultaneously segments the predicted point cloud. Both tasks are performed by a novel neural network architecture that extracts local geometric features and flow features for joint segmentation and prediction. Furthermore, we propose a new evaluation metric for future point cloud segmentation that resolves the inconsistent ordering of points in future point clouds. Experiments on real-world large-scale benchmark datasets show that the proposed network achieves higher prediction and segmentation accuracy than the baseline methods.
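To illustrate why point order matters when scoring a segmented future point cloud, the following is a minimal sketch of one possible order-invariant evaluation. It is not the metric proposed in the paper, whose definition is not given in this abstract. It assumes that each predicted point can borrow the label of its nearest ground-truth point, and the names `nearest_neighbor_labels`, `mean_iou`, and `order_invariant_miou` are hypothetical.

```python
import numpy as np


def nearest_neighbor_labels(pred_points, gt_points, gt_labels):
    """For each predicted point, take the label of its closest ground-truth point."""
    # Pairwise squared distances, shape (num_pred, num_gt).
    diff = pred_points[:, None, :] - gt_points[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)
    return gt_labels[np.argmin(sq_dist, axis=1)]


def mean_iou(pred_labels, target_labels, num_classes):
    """Mean intersection-over-union over the classes that occur at least once."""
    ious = []
    for c in range(num_classes):
        pred_c = pred_labels == c
        target_c = target_labels == c
        union = np.logical_or(pred_c, target_c).sum()
        if union == 0:
            continue  # class absent from both label sets
        ious.append(np.logical_and(pred_c, target_c).sum() / union)
    return float(np.mean(ious)) if ious else float("nan")


def order_invariant_miou(pred_points, pred_labels, gt_points, gt_labels, num_classes):
    """Assumed metric: match points by proximity instead of by array index."""
    target = nearest_neighbor_labels(pred_points, gt_points, gt_labels)
    return mean_iou(pred_labels, target, num_classes)


# Toy example: the prediction is correct but its points are stored in a different order.
gt_points = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [5, 5, 5]], dtype=float)
gt_labels = np.array([0, 0, 1, 1])
perm = np.array([3, 1, 0, 2])
pred_points = gt_points[perm] + 0.01  # small prediction error
pred_labels = gt_labels[perm]

index_wise = mean_iou(pred_labels, gt_labels, num_classes=2)
matched = order_invariant_miou(pred_points, pred_labels, gt_points, gt_labels, num_classes=2)
print(index_wise, matched)
```

In this toy case, comparing labels index by index penalizes a correct prediction only because its points are stored in a different order, whereas matching by proximity does not. The brute-force distance matrix is quadratic in the number of points, so a spatial index would be a more practical choice for large scans.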