[C30] A Splittable DNN-Based Object Detector for Edge-Cloud Collaborative Real-Time Video Inference

Abstract

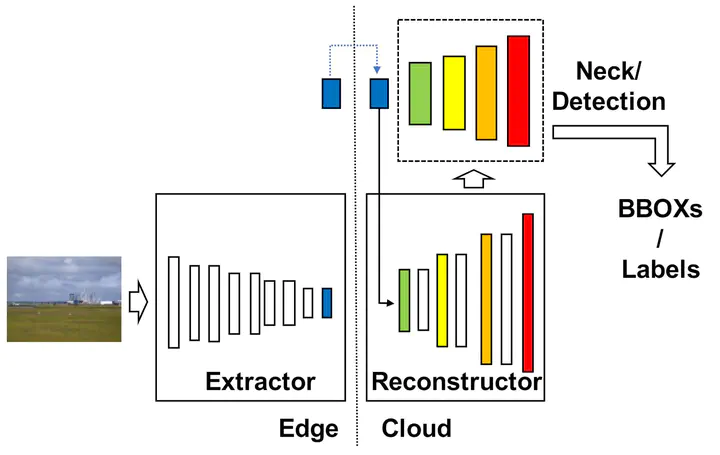

While recent advances in deep neural networks (DNNs) have enabled remarkable performance on various computer vision tasks, it remains challenging for edge devices to run complex DNN models in real time because of their stringent resource constraints. To improve inference throughput, recent studies have proposed collaborative intelligence (CI), which splits DNN computation between edge and cloud platforms, but mostly for simple tasks such as image classification. For general DNN-based object detectors with a branching architecture, however, CI is severely limited by the large overhead of transmitting the multiple intermediate features the detector requires. To solve this issue, this paper proposes a splittable object detector that enables edge-cloud collaborative real-time video inference. The proposed architecture includes a feature reconstruction network that generates the multiple features required for detection from a small-sized feature produced by the edge-side extractor. Asymmetric scaling of the feature extractor and reconstructor further reduces the transmitted feature size and the edge inference latency while maintaining detection accuracy. A performance evaluation using YOLOv5 on the NVIDIA Jetson TX2 platform in a WiFi environment shows that the proposed model achieves 28 fps, which is 2.45× and 1.56× higher than edge-only and cloud-only inference, respectively.
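To make the transmission argument concrete, here is a back-of-the-envelope Python sketch. It is not the paper's method or measurement setup. It assumes that edge compute, network transmission, and cloud compute are pipelined across consecutive video frames, so the slowest stage sets the frame rate. It compares a naive split that must send three multi-scale feature maps, loosely modeled on a YOLO-style detector at 640×640 input with strides 8, 16, and 32, against sending one compact feature. The feature shapes, latencies, float32 encoding, 50 Mbit/s link, and the names `payload_bytes`, `SplitPlan`, and `pipelined_fps` are all hypothetical.

```python
"""Hypothetical throughput model for edge-cloud split inference.

All shapes, latencies and bandwidths are illustrative placeholders,
not values taken from the paper.
"""
from dataclasses import dataclass
from typing import List, Tuple

Shape = Tuple[int, int, int]  # (channels, height, width) of one feature map


def payload_bytes(shapes: List[Shape], bytes_per_elem: int = 4) -> int:
    """Bytes needed to send every feature map in `shapes` (float32 by default)."""
    return sum(c * h * w * bytes_per_elem for c, h, w in shapes)


@dataclass
class SplitPlan:
    name: str
    edge_ms: float       # hypothetical edge-side compute time per frame
    sent: List[Shape]    # feature maps transmitted from edge to cloud
    cloud_ms: float      # hypothetical cloud-side compute time per frame


def transmit_ms(plan: SplitPlan, bandwidth_mbps: float) -> float:
    """Time to push one frame's features over a link of `bandwidth_mbps` Mbit/s."""
    bits = payload_bytes(plan.sent) * 8
    return bits / (bandwidth_mbps * 1e6) * 1e3


def pipelined_fps(plan: SplitPlan, bandwidth_mbps: float) -> float:
    """Frames per second when the three stages overlap across frames:
    the slowest stage (edge, link, or cloud) is the bottleneck."""
    bottleneck = max(plan.edge_ms, transmit_ms(plan, bandwidth_mbps), plan.cloud_ms)
    return 1000.0 / bottleneck


# Naive split of a branching detector: every multi-scale map must be sent.
naive = SplitPlan("naive split", edge_ms=20.0,
                  sent=[(128, 80, 80), (256, 40, 40), (512, 20, 20)],
                  cloud_ms=10.0)

# Single compact feature, to be expanded by a cloud-side reconstructor.
compact = SplitPlan("compact feature", edge_ms=25.0,
                    sent=[(32, 40, 40)], cloud_ms=15.0)

if __name__ == "__main__":
    link_mbps = 50.0  # hypothetical WiFi uplink
    for plan in (naive, compact):
        print(f"{plan.name}: {payload_bytes(plan.sent)} bytes/frame, "
              f"{transmit_ms(plan, link_mbps):.1f} ms to send, "
              f"{pipelined_fps(plan, link_mbps):.1f} fps")
```

Under these made-up numbers, the link dominates the naive split (about 5.7 MB per frame), while the compact feature moves the bottleneck back toward compute. This mirrors the motivation for a feature reconstruction network, though the real gains depend on the actual architecture and network. For reference, the reported speedups imply roughly 28 / 2.45 ≈ 11.4 fps for edge-only and 28 / 1.56 ≈ 17.9 fps for cloud-only inference.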