[C38] An Efficient Systolic Array with Variable Data Precision and Dimension Support

Abstract

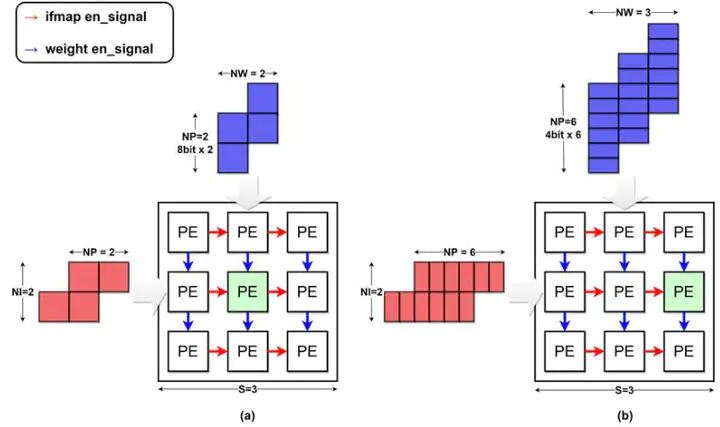

Systolic array (SA) architectures have been widely employed in deep learning accelerators because of their high computational efficiency. However, a fixed SA structure is inefficient in both performance and power when it processes layers with varying input dimensions and data precisions. To address this drawback, this work proposes a flexible systolic array architecture with variable data precision and dimension support (PD-FSA) that computes layers of varying shapes in fewer computing cycles. By calculating the minimum number of cycles required for each input layer, the proposed architecture avoids unnecessary computing cycles. We also propose adjusting the data precision according to the layer dimensions to further reduce the number of computing cycles. Experimental results show that the proposed PD-FSA reduces the number of computing cycles by up to about 2.9×.
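To make the idea of computing the minimum cycles per layer more concrete, the sketch below uses a common first-order cycle model for a weight-stationary systolic array. The dataflow, the per-tile cost formula, the idea that the flexible array activates only the rows and columns a layer needs, and the assumption that an 8-bit PE can pack two 4-bit MACs are all illustrative assumptions, not the PD-FSA design. The functions `ws_cycles` and `flexible_cycles` are hypothetical names introduced here.

```python
import math


def ws_cycles(M: int, K: int, N: int, rows: int, cols: int) -> int:
    """First-order cycle estimate for Y[M x N] = X[M x K] @ W[K x N]
    on a weight-stationary rows x cols systolic array (assumed model).

    W is split into ceil(K/rows) * ceil(N/cols) tiles ("folds").
    Each fold costs `rows` cycles to preload weights, plus
    M + rows + cols - 2 cycles to stream M input rows through the
    skewed pipeline and drain the last partial sums.
    """
    folds = math.ceil(K / rows) * math.ceil(N / cols)
    per_fold = rows + (M + rows + cols - 2)
    return folds * per_fold


def flexible_cycles(M: int, K: int, N: int, rows: int, cols: int,
                    base_bits: int = 8, bits: int = 8) -> int:
    """Hypothetical flexible array (assumption, not the paper's design).

    - Dimension support: only min(rows, K) rows and min(eff_cols, N)
      columns are activated, so the pipeline fill/drain latency shrinks
      for small layers.
    - Precision support: at `bits` < `base_bits`, each PE column is
      assumed to pack base_bits // bits narrower MACs.
    """
    if base_bits % bits != 0:
        raise ValueError("bits must evenly divide base_bits")
    pack = base_bits // bits
    eff_cols = cols * pack
    return ws_cycles(M, K, N, min(rows, K), min(eff_cols, N))


if __name__ == "__main__":
    # Small layer on a 16x16 array: fixed array vs. trimmed dimensions.
    print(ws_cycles(64, 8, 8, 16, 16), flexible_cycles(64, 8, 8, 16, 16))
    # Wider layer: fixed 8-bit array vs. assumed 4-bit column packing.
    print(ws_cycles(64, 16, 64, 16, 16),
          flexible_cycles(64, 16, 64, 16, 16, bits=4))
```

Under this toy model the two lines print `110 86` and `440 252`. These numbers only illustrate the mechanism; they ignore accuracy loss at lower precision, memory bandwidth, and the control overhead of a reconfigurable array, and they are not the paper's reported results.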