[J14] Binary-Classifiers-Enabled Filters for Semi-Supervised Learning

Abstract

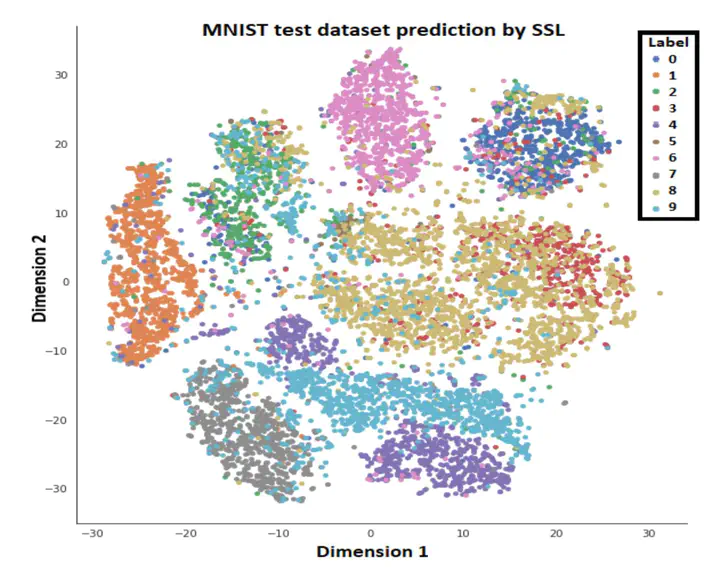

A typical semi-supervised learning (SSL) scheme trains a single model on labeled data and uses pseudo-labeling to obtain labels for unlabeled data. During pseudo-labeling, however, samples are usually filtered with a probability threshold, and choosing an effective threshold is difficult. With a high threshold, correctly predicted samples may be left unlabeled; with a low threshold, samples can be labeled wrongly. This threshold issue degrades the overall performance of the model. This paper addresses the issue by proposing a novel SSL approach, Binary-Classifiers-Enabled Filters for Semi-Supervised Learning (BSSL), which labels the unlabeled data by using binary classifiers as data filters. That is, we train a dedicated binary classifier for each class. After training, we propose three methods for labeling the unlabeled data: cascading, non-cascading, and rank-based binary classifiers. Our extensive experiments show that rank-based binary classifiers are the best choice for labeling the data. Our approach eliminates threshold selection and thereby improves the performance of the model. Comprehensive experiments demonstrate the effectiveness of our approach across a variety of domains, including image, text, and audio classification, on datasets including MNIST, Fashion-MNIST, EuroSAT, ESC-10, Free Spoken Digit Dataset, Audio Emotion Recognition, Reuters, and Mice Protein. The rank-based binary classifiers (BSSL) approach achieves an absolute performance improvement of at least 10% and 5% over supervised learning (SL) and SSL, respectively, on audio datasets across different numbers of samples, except on the RAVDESS dataset. Moreover, BSSL performs strongly on image datasets, particularly when the number of samples is very small. Overall, BSSL outperforms both the purely supervised learning approach and SSL pseudo-labeling approaches across different numbers of samples.
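To make the contrast concrete, the following is a minimal Python sketch of threshold-based pseudo-labeling next to three binary-classifier filters. The abstract does not specify how the cascading, non-cascading, and rank-based variants work, so the three filter functions below are only one plausible reading and not the authors' implementation. All function names, the score-matrix layout, and the use of each binary classifier's default 0.5 decision boundary are assumptions made for illustration.

```python
from typing import List, Optional

Scores = List[List[float]]  # scores[i][c]: score for sample i and class c


def argmax(row: List[float]) -> int:
    """Index of the largest value in a row (first one wins on ties)."""
    return max(range(len(row)), key=row.__getitem__)


def threshold_pseudo_labels(probs: Scores, threshold: float) -> List[Optional[int]]:
    """Conventional pseudo-labeling: keep the top class only if its
    probability reaches the hand-picked threshold, otherwise skip (None)."""
    return [argmax(r) if r[argmax(r)] >= threshold else None for r in probs]


def cascading_labels(binary_scores: Scores) -> List[Optional[int]]:
    """Assumed reading of 'cascading': classifiers are applied in class
    order and the first one that accepts the sample claims it."""
    labels: List[Optional[int]] = []
    for row in binary_scores:
        accepted = [c for c, s in enumerate(row) if s >= 0.5]
        labels.append(accepted[0] if accepted else None)
    return labels


def non_cascading_labels(binary_scores: Scores) -> List[Optional[int]]:
    """Assumed reading of 'non-cascading': all classifiers vote
    independently; a sample is labeled only if exactly one accepts it."""
    labels: List[Optional[int]] = []
    for row in binary_scores:
        accepted = [c for c, s in enumerate(row) if s >= 0.5]
        labels.append(accepted[0] if len(accepted) == 1 else None)
    return labels


def rank_based_labels(binary_scores: Scores) -> List[int]:
    """Assumed reading of 'rank-based': rank the per-class binary scores
    and assign the top-ranked class, with no tuned cut-off."""
    return [argmax(row) for row in binary_scores]


# Hypothetical scores for three unlabeled samples and two classes.
probs = [[0.55, 0.45], [0.95, 0.05], [0.40, 0.60]]
binary_scores = [[0.7, 0.8], [0.2, 0.9], [0.1, 0.3]]

print(threshold_pseudo_labels(probs, 0.9))  # strict threshold skips samples
print(threshold_pseudo_labels(probs, 0.5))  # loose threshold labels everything
print(cascading_labels(binary_scores))
print(non_cascading_labels(binary_scores))
print(rank_based_labels(binary_scores))
```

In this toy data the strict threshold discards two of the three samples, while the loose one labels every sample regardless of how uncertain the model is, which is the trade-off the abstract describes. The filter variants replace that tuned cut-off with per-class accept/reject decisions or a ranking.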